At Box, we’ve long used Jenkins as our primary continuous integration solution. Aside from frequent, regular builds to ensure projects are up to scratch, we also rely heavily on the automated building of Pull Requests (PRs) before allowing them to be merged.

Recently though, we encountered a scenario where the out-of-the-box setup just didn’t quite cut it.

The situation: one of our projects involved creating an application that used Angular JS for the front-end with Symfony for the back-end. While the end result from a user’s perspective is one single application, from a development point of view this is essentially two separate applications, each with their own dependencies, build requirements, test suites, etc.

We considered a few different ways of handling this:

Single repository with a single Jenkins job: potentially the simplest solution, but one that is flawed. We run our PHP builds against Jenkins slaves that are running our target versions of PHP, so it makes sense that we should do the same for other technologies, in this case Node.js. This project, however, involves both. It’s clearly not practical to have a different Jenkins slave for every combination of PHP and Node.js, nor is it practical to have separate slaves dedicated to each project’s target environment(s). The result is a compromise: run the builds against either the correct PHP slave or the correct Node.js slave, but not both. Needless to say, we didn’t choose this solution.

Separate repositories and Jenkins jobs: another seemingly straightforward approach is to version the Angular and Symfony applications in separate repositories, each with its own Jenkins job running on the appropriate Jenkins slave. This works OK, but is less than ideal: there are two repositories to maintain, and our ideal build process would produce one single build result that reflects both applications, a single pass or fail after a pull request is opened or updated. With a scarcity of other apparent solutions, we seriously considered this method since, despite the extra overhead involved, it is a clear improvement over the first.

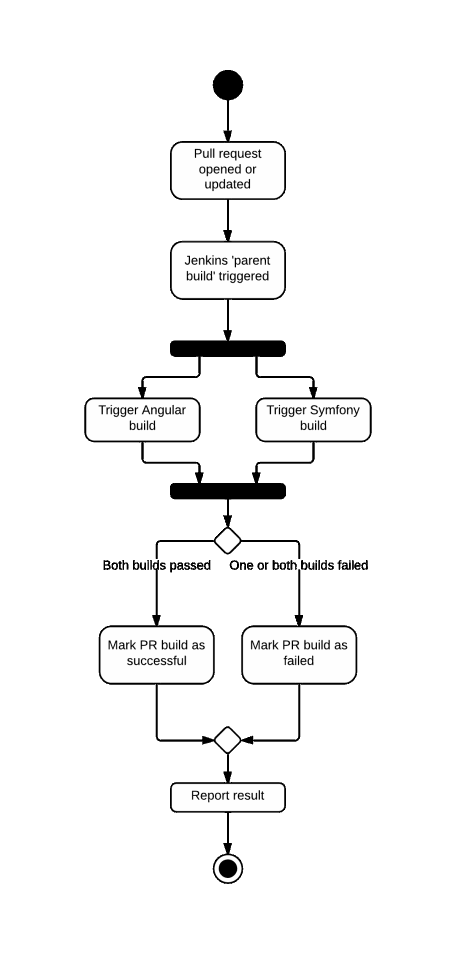

Our solution: after trawling the depths of the internet, I came across a Jenkins plugin, Build Flow, that seemed to solve our problem. While the overall functionality of the plugin goes well beyond both our requirements and the scope of this blog post, put simply it allows the creation of a parent job that orchestrates one or more child jobs using a dedicated DSL and then reports the result.

What does this mean? Well, for starters it means having a third Jenkins job just to kick off the two real jobs. As far as I’ve noticed though, this is the only real downside to an otherwise elegant solution. The basic flow of the build process can be seen in the accompanying UML diagram, but put simply:

- PR is opened or updated

- The parent job is triggered, which in turn triggers the Symfony and Angular application jobs

- If both Symfony and Angular builds complete successfully, then the parent job also reports success

- If either the Symfony or Angular (or both) builds fail, the parent job also reports failure

- The pull request is updated with the pass or fail status of the parent job

So, as a result we have:

- One repository containing both the Angular and Symfony applications

- One single build result that is reflective of both the Angular and Symfony builds

In short, exactly what we wanted! As a bonus, this all turns out to be very easy to set up…

Setting up Jenkins Build Flow

1. Install the GitHub Pull Request Builder and Build Flow plugins

First off, ensure you have both the GitHub Pull Request Builder and the Build Flow plugins installed on your Jenkins server.

2. Create your Jenkins jobs

If you haven’t done so already, create your real Jenkins jobs (the ones that actually build and test your application) and assign them to run on the required Jenkins slaves.

3. Create the Build Flow job

So far, so normal. Next, we’re going to create a special kind of Jenkins job, a Build Flow job.

As already described, this job exists purely to run when a PR is opened or updated and is responsible for kicking off our real builds. It’s created like any other Jenkins job, though as you’ll see it’s configured a little differently…

For brevity, I’m not going to dwell on how to trigger the Build Flow job when a PR is opened, as this is covered by the existing plugin documentation. What I will focus on, however, is the very short, very simple bit of code needed to run multiple jobs in parallel. Let’s take a look at the code:

    def sha1 = build.environment.get('sha1')

What’s going on here? Well, the GitHub PR plugin stores the SHA of the commit that needs to be built in an environment variable (sha1). In turn, we need to pass this SHA to any child builds we trigger.

It’s the parallel block where the interesting stuff happens. Most of this should look fairly self-explanatory, but essentially we’re saying “run these two builds (that we specify by name) in parallel”.
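Putting the two fragments from this post together, the complete flow script could look like the sketch below. It only runs inside a Build Flow job, not as standalone Groovy. The job names are the placeholders used in this post, and the `out.println` logging line is an optional addition of mine that assumes the `out` console binding described in the Build Flow plugin's documentation.

```groovy
// Build Flow DSL sketch: paste into the flow definition of the parent job.

// The GitHub Pull Request Builder exposes the commit to build as `sha1`.
def sha1 = build.environment.get('sha1')

// Optional: log the commit to the parent job's console
// (assumes the `out` binding provided by the Build Flow plugin).
out.println "Triggering child builds for commit ${sha1}"

// Start both child jobs at the same time, passing the commit SHA
// to each one as a build parameter named `sha1`.
parallel(
    { build("my-project-php-build", sha1: sha1) },
    { build("my-project-node-build", sha1: sha1) }
)
```

Each closure passed to parallel is one branch of the flow, so adding a third child job should just be a matter of adding another `{ build(...) }` entry.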

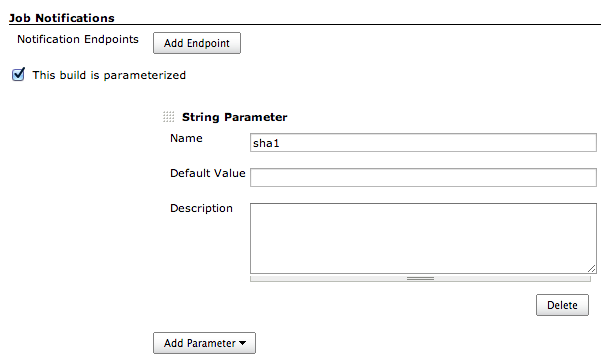

You’ll notice that we’re also passing in the commit SHA as a parameter (conveniently also called sha1) to each build. This means we now have to modify these builds to become parameterised builds.

For every build you need to run, open its configuration page, tick the ‘This build is parameterised’ checkbox and add a parameter named sha1.

4. Configure your source control

We also need to configure the source control settings of our builds to use this SHA. In the Source Code Management section of each build, we just need to set Branches to build to the sha1 parameter that we’ve made available, written as ${sha1}.

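For reference, this is the parallel block from the Build Flow job that supplies the sha1 value to each build:

    parallel (
        { build("my-project-php-build", sha1: sha1) },
        { build("my-project-node-build", sha1: sha1) }
    )

And that’s it!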

Now, when we open or update a PR, our builds run and the PR is passed or failed as expected. If it should fail (which of course never happens), then the Build Flow job will contain information about which job(s) failed (including links to the relevant jobs).
